| Client: | IBM |

| Dates: | Jan–Oct 2017 |

| Skills/Subjects: | business, ibm, information architecture, information visualization, interaction design, management, professional, prototyping, research, UI design, usability, Usability testing, UX, web app |

| URL: | https://www.ibm.com/blogs/bluemix/2017/12/beta-release-new-vsi-monitoring-tool-ibm-cloud/ |

Although I was primarily a researcher at the time, I had been hired as a hybrid designer/researcher with a strong development and computer science background. My manager paired me, as the design lead, with offering manager Steve Harrington to build a new server monitoring service to replace what IBM-acquired company Softlayer had in place at the time. Our goal was not just to build a good service but to turn around an offering that was losing a significant amount of money annually.

Throughout conception and development, I worked very closely with Steve to ensure our service was defined as closely to our users’ needs as it was to our business needs. Over time we worked through many research and offering questions and assembled a team of about a dozen, along with dozens more stakeholders, to prepare a beta launch in October 2017.

Research

Early on, I was only vaguely familiar with system administration from doing such tasks at previous companies and in personal projects. None of that work approached the scale or proficiency of an enterprise sysadmin, which prompted me to gather all the data and artifacts I could, whether by finding existing material or by conducting the research myself (mostly the latter). I organized these artifacts into four categories:

- offering: market data from IMS and business stakeholders, hills and user stories, and competitive analyses

- behavioral: Google Analytics, Intercom, and Amplitude data from actual users on the as-is and related offerings

- evaluative: NPS, usability testing, structured and semi-structured interviews, expert heuristic evaluation

- formative: interviews, surveys, academic research, professional publications, blogs, social media

These enabled me to create initial artifacts describing who used Softlayer’s existing monitoring service, what they used it for, and why. From this point I crafted a rough research plan with Steve, starting by defining key metrics for the as-is and to-be offering so that we could meaningfully measure the impact of our work. Steve worked with his offering leader and others to better understand the business area, while I worked with the IBM Cloud research community to find leads on users of monitoring services.

My colleagues from the nearby Hybrid Cloud teams were the most helpful in identifying and introducing me to potential sponsor users. I also worked through my Softlayer marketing and customer support contacts to build long-lasting relationships with the folks who perhaps know our customers best.

With these folks available, I used snowball sampling to build my repository of subject matter experts, customers, and users, who together gave us a general understanding of IBM’s niche in the market. Using mixed methods, including interviews, surveys via email and Intercom, and a design research jam at the Softlayer office in Dallas, Steve and I compiled enough human and domain research to begin prototyping. This continued in a similar fashion over the following months alongside my research for other Infrastructure services, eventually shifting toward more evaluative and incremental research that directly informed design while still adding to our general domain knowledge.

We synthesized this data for our internal stakeholders into hills and user stories, target groups and stakeholders, and jobs to be done (these are IBM-confidential).

Design

As the design lead, I began by lo-fi prototyping small changes to the as-is experience, based on standard heuristics and methods from my expert evaluation guide. Feedback from these lo-fi experiments helped us find a general direction for the new offering.

As we gathered more research and learned more about the available technical capabilities from the Logmet team, with whom we had recently partnered, I started prototyping in higher fidelity using Sketch. I built on the same lo-fi Sketch prototype to iterate on the design and to recruit more internal stakeholders to give feedback and join our team. Much of my early design work was discovering and reconciling design efforts from other IBM teams and internal Softlayer monitoring teams (there were many). Managing these design boundaries was important to defining our internal competitiveness and our fit within proper workflows.

Steve and my design team were the first to review each iteration, critiquing its interaction and UX and assessing its competitive advantages over other services. Working in two-week sprints, we also involved other stakeholders within IBM to discuss the design and how it might integrate with other services as part of our long-term roadmap. Most of our work was integrated with my teammate Megan Baxter’s work for Cloud’s next-generation project, the Genesis beta, in late 2017. We continued working together to ensure a co-developed experience that would yield transferable insights from testing and iterations on both the Softlayer and next-generation UIs.

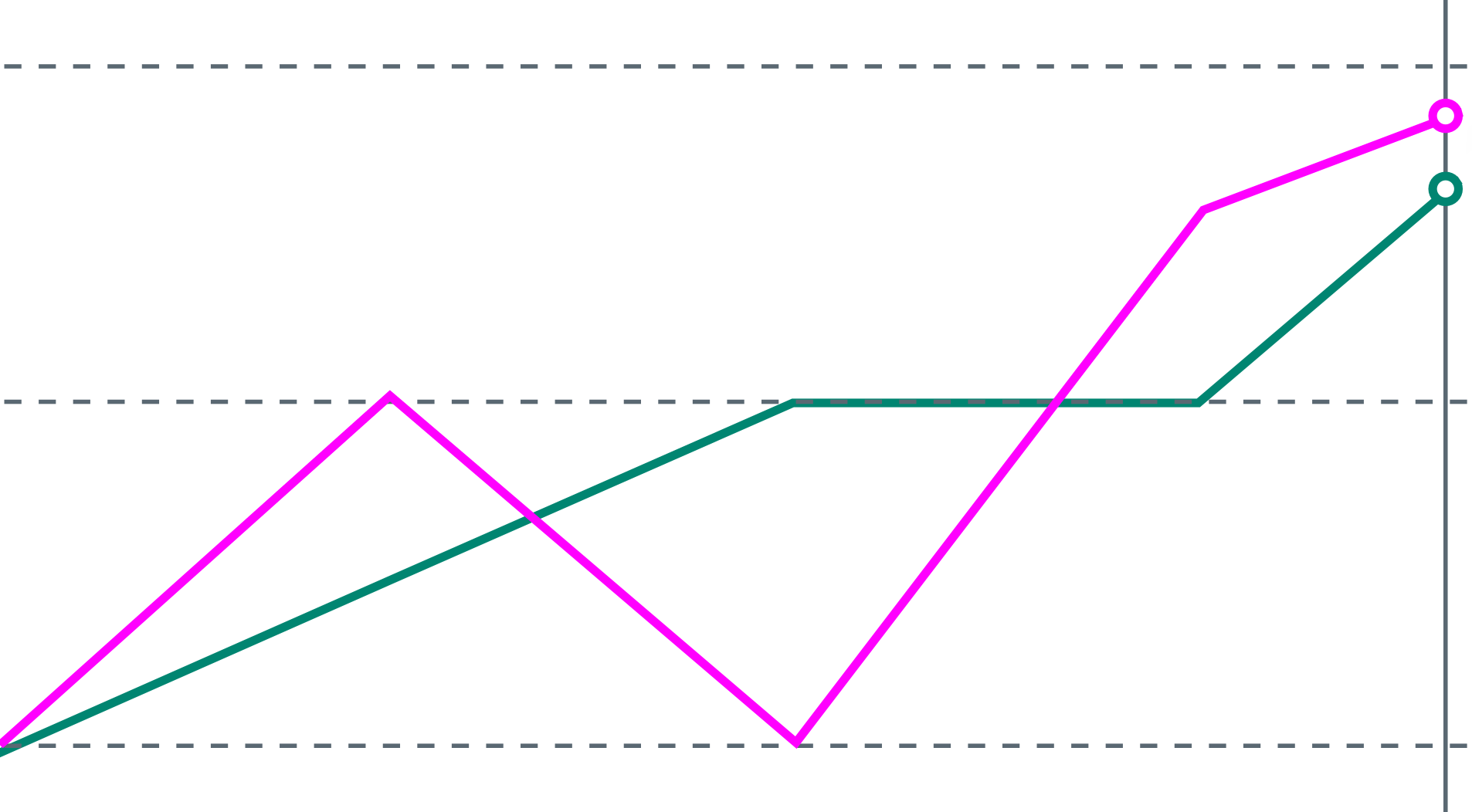

Before the late 2017 launch, we created interim design and offering specifications that aligned with our co-created roadmap. At each development and design step, we did experience-based QA testing and usability testing as conditions allowed, with each round showing a higher NPS and positive progress toward our design goals.